Photo by Brett Jordan on Unsplash

Has a philanthropic strategy ever before become an identity? I'm confident that neither John D. Rockefeller nor Andrew Carnegie ever referred to themselves as scientific philanthropists, a label historians have applied to them. I've heard organizations tout their work as trust-based philanthropy, but I have yet to hear anyone refer to themselves that way. Same with strategic philanthropy. And even if you can find one or two people who call themselves "strategic" or "trust-based" philanthropists, I'm confident you can't find me thousands.

Effective altruism, on the other hand, is all three: ideology, identity, and philanthropic approach.

Given the behavior of Sam Bankman-Fried and his pals at FTX, it's also a failed cover for fraud. But I digress.

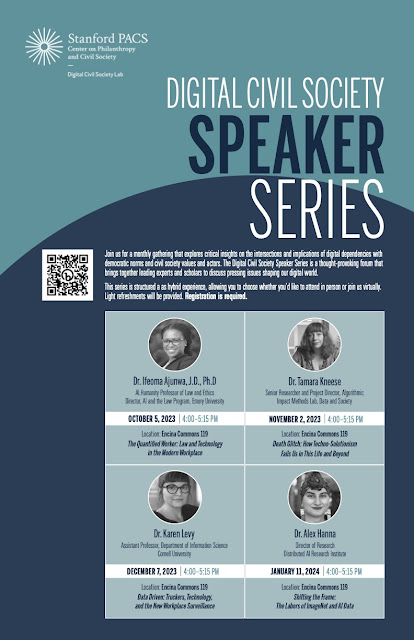

In the upcoming Blueprint24 (due out on December 15; it will be free and downloadable here), I look at the role of Effective Altruism in the burgeoning universe of AI organizations. I went in with two hypotheses.

H1: There are hundreds of new organizations focused on "trustworthy" or "safe" AI, but behind them is a small group of people with strong connections to one another.

H2: For some reason, these organizations over-represent "hybrids": organizations with many different forms and names, connected through a common group of founders, funders, and employees.

The Blueprint presents my findings on H1 and H2 (H1: yes, and the network is bigger than I thought; H2: yes, and I offer three possible reasons). It will also make public the database of organizations, founders, and funders that a student built for me. So the weekend drama over at OpenAI certainly caught my attention.

By now, you've probably read about some of what happened at OpenAI. As you follow that story, keep in mind that at least two of the four board members who voted to oust the CEO are self-identified effective altruists, as is the person who was just named interim CEO. These are board members of the 501(c)(3) nonprofit OpenAI, Inc.

Effective Altruism's interest in AI centers on the potential for existential risk: the concern that AI will destroy humanity in some way. Effective altruists also bring a decidedly utilitarian philosophy to their work, to the point of calculating the value of things like a "life year" and a "disability-affected life year" and using those calculations to inform their giving.*

The focus on existential threats has three consequences in the real world, in real time. First, it distracts from actual harms being done to real people right now. Second, the spectre of faraway harms isn't as motivating as it should be; see humanity's track record on climate change, pandemic prevention, inequality, etc. Pointing to the far-off future is a sure way to weaken regulators' attention and to ensure that the public doesn't prioritize protecting itself. Third, far-off predictions require arguing how we get from now to then, which bakes in a series of steps and processes (often called path dependencies). Those path dependencies then make what's being done today seem like the only thing we could possibly be doing.

Think of it like this: suppose I tell you we're going to get together on Thursday to give thanks and celebrate community. From this, we'd decide, OK, we need to buy the turkey now. Once we have a turkey, we're going to have to cook it. Then we're going to have to eat it. Come Thursday, we will have turkey, regardless of anything else. We've set our direction, and there's only one path to Thursday.

But what if, instead, I tell you we want to get together on Thursday to celebrate community and give thanks, and we also want to make sure that everyone we invite has enough to eat between now and Thursday? We probably wouldn't buy a turkey at all. Instead, we'd spend our time checking in on each other's well-being and pantry situation, and if we found people without food, we'd find them some. We could still get together on Thursday, comfortable in knowing that everyone has had their daily needs met. In other words, if we focus on the things going wrong now, we can fix them without setting ourselves down a path of no return. And we still get to enjoy ourselves and give thanks on Thursday.**

The focus on long-term harms allows the very people who are building these systems to keep building them. They then cast themselves as "heroic" for raising concerns while they simultaneously shape (and benefit from) what they're doing now. Once their tools are embedded in our lives, we will be headed toward the future they portend, and it will be much harder to rid ourselves of those tools. The moment of greatest choice is now, before we head much further down any path.

It's important to interrogate the values and aspirations of those who are designing AI systems, like the leadership of OpenAI. Not at a surface level, but more deeply. Dr. Timnit Gebru helps us do this through her work at DAIR, and also by doing some of the heavy lifting on what these folks believe. She offers an acronym, TESCREAL, to explain what she's found. TESCREAL (the bonus buzzword I promised) stands for "Transhumanism, Extropianism, Singularitarianism, Cosmism, Rationalism, Effective Altruism, and Longtermism." Listen here to Dr. Gebru and Émile Torres discussing where these terms come from. And don't skip over the part about race and eugenics.

Effective Altruism is much more than a way to think about giving away one's money. It's an ideology that has become an identity, a self-professed identity. That reveals a power, an attraction, in the approach that is unmatched, as far as I can tell, in the history of modern philanthropy. At the moment, this identity and ideology also seem to play a far greater role in the development of AI than many have realized. It's critical that we understand what its adherents believe and what they're building.

*As someone with a newly acquired disability, I'd be curious about their estimate of the difference between a "life year" and a "disability-affected life year" if I weren't already so repulsed by the idea of putting a value on either one.

**Agreed, not the best metaphor. But maybe it works, a little bit?